diff --git a/.licenses/npm/@actions/artifact.dep.yml b/.licenses/npm/@actions/artifact.dep.yml

index 5325bd1..33a2153 100644

--- a/.licenses/npm/@actions/artifact.dep.yml

+++ b/.licenses/npm/@actions/artifact.dep.yml

@@ -1,6 +1,6 @@

---

name: "@actions/artifact"

-version: 2.3.2

+version: 2.2.2

type: npm

summary: Actions artifact lib

homepage: https://github.com/actions/toolkit/tree/main/packages/artifact

diff --git a/.vscode/launch.json b/.vscode/launch.json

deleted file mode 100644

index 94965ca..0000000

--- a/.vscode/launch.json

+++ /dev/null

@@ -1,36 +0,0 @@

-{

- "version": "0.2.0",

- "configurations": [

- {

- "type": "node",

- "request": "launch",

- "name": "Debug Jest Tests",

- "program": "${workspaceFolder}/node_modules/jest/bin/jest.js",

- "args": [

- "--runInBand",

- "--testTimeout",

- "10000"

- ],

- "cwd": "${workspaceFolder}",

- "console": "integratedTerminal",

- "internalConsoleOptions": "neverOpen",

- "disableOptimisticBPs": true

- },

- {

- "type": "node",

- "request": "launch",

- "name": "Debug Current Test File",

- "program": "${workspaceFolder}/node_modules/jest/bin/jest.js",

- "args": [

- "--runInBand",

- "--testTimeout",

- "10000",

- "${relativeFile}"

- ],

- "cwd": "${workspaceFolder}",

- "console": "integratedTerminal",

- "internalConsoleOptions": "neverOpen",

- "disableOptimisticBPs": true

- }

- ]

-}

\ No newline at end of file

diff --git a/README.md b/README.md

index 479df99..507f6e1 100644

--- a/README.md

+++ b/README.md

@@ -473,9 +473,8 @@ If you must preserve permissions, you can `tar` all of your files together befor

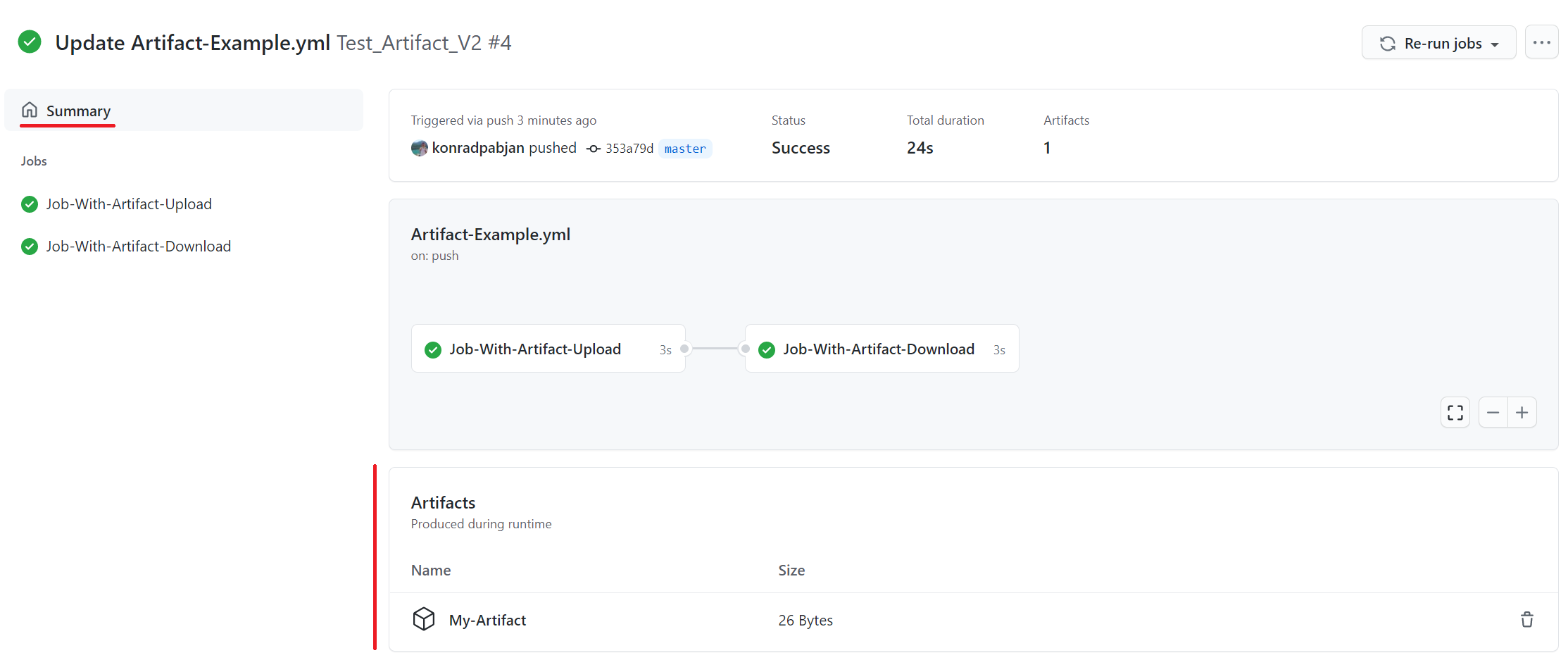

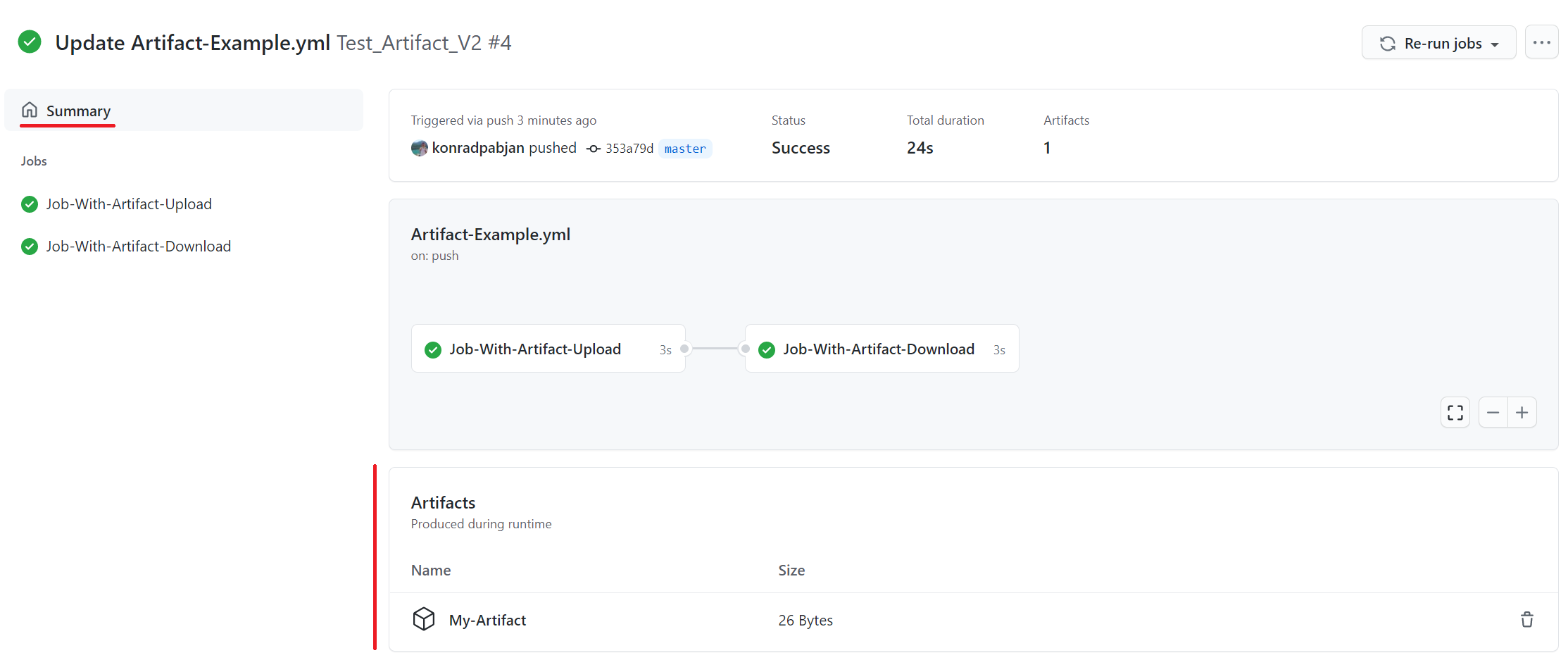

At the bottom of the workflow summary page, there is a dedicated section for artifacts. Here's a screenshot of something you might see:

- -

+

-

+ There is a trashcan icon that can be used to delete the artifact. This icon will only appear for users who have write permissions to the repository.

-The size of the artifact is denoted in bytes. The displayed artifact size denotes the size of the zip that `upload-artifact` creates during upload. The Digest column will display the SHA256 digest of the artifact being uploaded.

+The size of the artifact is denoted in bytes. The displayed artifact size denotes the size of the zip that `upload-artifact` creates during upload.

diff --git a/dist/merge/index.js b/dist/merge/index.js

index eabca8a..31792d4 100644

--- a/dist/merge/index.js

+++ b/dist/merge/index.js

@@ -824,7 +824,7 @@ __exportStar(__nccwpck_require__(49773), exports);

"use strict";

Object.defineProperty(exports, "__esModule", ({ value: true }));

-exports.ArtifactService = exports.DeleteArtifactResponse = exports.DeleteArtifactRequest = exports.GetSignedArtifactURLResponse = exports.GetSignedArtifactURLRequest = exports.ListArtifactsResponse_MonolithArtifact = exports.ListArtifactsResponse = exports.ListArtifactsRequest = exports.FinalizeArtifactResponse = exports.FinalizeArtifactRequest = exports.CreateArtifactResponse = exports.CreateArtifactRequest = exports.FinalizeMigratedArtifactResponse = exports.FinalizeMigratedArtifactRequest = exports.MigrateArtifactResponse = exports.MigrateArtifactRequest = void 0;

+exports.ArtifactService = exports.DeleteArtifactResponse = exports.DeleteArtifactRequest = exports.GetSignedArtifactURLResponse = exports.GetSignedArtifactURLRequest = exports.ListArtifactsResponse_MonolithArtifact = exports.ListArtifactsResponse = exports.ListArtifactsRequest = exports.FinalizeArtifactResponse = exports.FinalizeArtifactRequest = exports.CreateArtifactResponse = exports.CreateArtifactRequest = void 0;

// @generated by protobuf-ts 2.9.1 with parameter long_type_string,client_none,generate_dependencies

// @generated from protobuf file "results/api/v1/artifact.proto" (package "github.actions.results.api.v1", syntax proto3)

// tslint:disable

@@ -838,236 +838,6 @@ const wrappers_1 = __nccwpck_require__(8626);

const wrappers_2 = __nccwpck_require__(8626);

const timestamp_1 = __nccwpck_require__(54622);

// @generated message type with reflection information, may provide speed optimized methods

-class MigrateArtifactRequest$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.MigrateArtifactRequest", [

- { no: 1, name: "workflow_run_backend_id", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 2, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 3, name: "expires_at", kind: "message", T: () => timestamp_1.Timestamp }

- ]);

- }

- create(value) {

- const message = { workflowRunBackendId: "", name: "" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* string workflow_run_backend_id */ 1:

- message.workflowRunBackendId = reader.string();

- break;

- case /* string name */ 2:

- message.name = reader.string();

- break;

- case /* google.protobuf.Timestamp expires_at */ 3:

- message.expiresAt = timestamp_1.Timestamp.internalBinaryRead(reader, reader.uint32(), options, message.expiresAt);

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* string workflow_run_backend_id = 1; */

- if (message.workflowRunBackendId !== "")

- writer.tag(1, runtime_1.WireType.LengthDelimited).string(message.workflowRunBackendId);

- /* string name = 2; */

- if (message.name !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.name);

- /* google.protobuf.Timestamp expires_at = 3; */

- if (message.expiresAt)

- timestamp_1.Timestamp.internalBinaryWrite(message.expiresAt, writer.tag(3, runtime_1.WireType.LengthDelimited).fork(), options).join();

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.MigrateArtifactRequest

- */

-exports.MigrateArtifactRequest = new MigrateArtifactRequest$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class MigrateArtifactResponse$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.MigrateArtifactResponse", [

- { no: 1, name: "ok", kind: "scalar", T: 8 /*ScalarType.BOOL*/ },

- { no: 2, name: "signed_upload_url", kind: "scalar", T: 9 /*ScalarType.STRING*/ }

- ]);

- }

- create(value) {

- const message = { ok: false, signedUploadUrl: "" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* bool ok */ 1:

- message.ok = reader.bool();

- break;

- case /* string signed_upload_url */ 2:

- message.signedUploadUrl = reader.string();

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* bool ok = 1; */

- if (message.ok !== false)

- writer.tag(1, runtime_1.WireType.Varint).bool(message.ok);

- /* string signed_upload_url = 2; */

- if (message.signedUploadUrl !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.signedUploadUrl);

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.MigrateArtifactResponse

- */

-exports.MigrateArtifactResponse = new MigrateArtifactResponse$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class FinalizeMigratedArtifactRequest$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.FinalizeMigratedArtifactRequest", [

- { no: 1, name: "workflow_run_backend_id", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 2, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 3, name: "size", kind: "scalar", T: 3 /*ScalarType.INT64*/ }

- ]);

- }

- create(value) {

- const message = { workflowRunBackendId: "", name: "", size: "0" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* string workflow_run_backend_id */ 1:

- message.workflowRunBackendId = reader.string();

- break;

- case /* string name */ 2:

- message.name = reader.string();

- break;

- case /* int64 size */ 3:

- message.size = reader.int64().toString();

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* string workflow_run_backend_id = 1; */

- if (message.workflowRunBackendId !== "")

- writer.tag(1, runtime_1.WireType.LengthDelimited).string(message.workflowRunBackendId);

- /* string name = 2; */

- if (message.name !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.name);

- /* int64 size = 3; */

- if (message.size !== "0")

- writer.tag(3, runtime_1.WireType.Varint).int64(message.size);

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.FinalizeMigratedArtifactRequest

- */

-exports.FinalizeMigratedArtifactRequest = new FinalizeMigratedArtifactRequest$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class FinalizeMigratedArtifactResponse$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.FinalizeMigratedArtifactResponse", [

- { no: 1, name: "ok", kind: "scalar", T: 8 /*ScalarType.BOOL*/ },

- { no: 2, name: "artifact_id", kind: "scalar", T: 3 /*ScalarType.INT64*/ }

- ]);

- }

- create(value) {

- const message = { ok: false, artifactId: "0" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* bool ok */ 1:

- message.ok = reader.bool();

- break;

- case /* int64 artifact_id */ 2:

- message.artifactId = reader.int64().toString();

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* bool ok = 1; */

- if (message.ok !== false)

- writer.tag(1, runtime_1.WireType.Varint).bool(message.ok);

- /* int64 artifact_id = 2; */

- if (message.artifactId !== "0")

- writer.tag(2, runtime_1.WireType.Varint).int64(message.artifactId);

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.FinalizeMigratedArtifactResponse

- */

-exports.FinalizeMigratedArtifactResponse = new FinalizeMigratedArtifactResponse$Type();

-// @generated message type with reflection information, may provide speed optimized methods

class CreateArtifactRequest$Type extends runtime_5.MessageType {

constructor() {

super("github.actions.results.api.v1.CreateArtifactRequest", [

@@ -1449,8 +1219,7 @@ class ListArtifactsResponse_MonolithArtifact$Type extends runtime_5.MessageType

{ no: 3, name: "database_id", kind: "scalar", T: 3 /*ScalarType.INT64*/ },

{ no: 4, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

{ no: 5, name: "size", kind: "scalar", T: 3 /*ScalarType.INT64*/ },

- { no: 6, name: "created_at", kind: "message", T: () => timestamp_1.Timestamp },

- { no: 7, name: "digest", kind: "message", T: () => wrappers_2.StringValue }

+ { no: 6, name: "created_at", kind: "message", T: () => timestamp_1.Timestamp }

]);

}

create(value) {

@@ -1483,9 +1252,6 @@ class ListArtifactsResponse_MonolithArtifact$Type extends runtime_5.MessageType

case /* google.protobuf.Timestamp created_at */ 6:

message.createdAt = timestamp_1.Timestamp.internalBinaryRead(reader, reader.uint32(), options, message.createdAt);

break;

- case /* google.protobuf.StringValue digest */ 7:

- message.digest = wrappers_2.StringValue.internalBinaryRead(reader, reader.uint32(), options, message.digest);

- break;

default:

let u = options.readUnknownField;

if (u === "throw")

@@ -1516,9 +1282,6 @@ class ListArtifactsResponse_MonolithArtifact$Type extends runtime_5.MessageType

/* google.protobuf.Timestamp created_at = 6; */

if (message.createdAt)

timestamp_1.Timestamp.internalBinaryWrite(message.createdAt, writer.tag(6, runtime_1.WireType.LengthDelimited).fork(), options).join();

- /* google.protobuf.StringValue digest = 7; */

- if (message.digest)

- wrappers_2.StringValue.internalBinaryWrite(message.digest, writer.tag(7, runtime_1.WireType.LengthDelimited).fork(), options).join();

let u = options.writeUnknownFields;

if (u !== false)

(u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

@@ -1760,9 +1523,7 @@ exports.ArtifactService = new runtime_rpc_1.ServiceType("github.actions.results.

{ name: "FinalizeArtifact", options: {}, I: exports.FinalizeArtifactRequest, O: exports.FinalizeArtifactResponse },

{ name: "ListArtifacts", options: {}, I: exports.ListArtifactsRequest, O: exports.ListArtifactsResponse },

{ name: "GetSignedArtifactURL", options: {}, I: exports.GetSignedArtifactURLRequest, O: exports.GetSignedArtifactURLResponse },

- { name: "DeleteArtifact", options: {}, I: exports.DeleteArtifactRequest, O: exports.DeleteArtifactResponse },

- { name: "MigrateArtifact", options: {}, I: exports.MigrateArtifactRequest, O: exports.MigrateArtifactResponse },

- { name: "FinalizeMigratedArtifact", options: {}, I: exports.FinalizeMigratedArtifactRequest, O: exports.FinalizeMigratedArtifactResponse }

+ { name: "DeleteArtifact", options: {}, I: exports.DeleteArtifactRequest, O: exports.DeleteArtifactResponse }

]);

//# sourceMappingURL=artifact.js.map

@@ -2159,8 +1920,6 @@ var __importDefault = (this && this.__importDefault) || function (mod) {

Object.defineProperty(exports, "__esModule", ({ value: true }));

exports.downloadArtifactInternal = exports.downloadArtifactPublic = exports.streamExtractExternal = void 0;

const promises_1 = __importDefault(__nccwpck_require__(73292));

-const crypto = __importStar(__nccwpck_require__(6113));

-const stream = __importStar(__nccwpck_require__(12781));

const github = __importStar(__nccwpck_require__(21260));

const core = __importStar(__nccwpck_require__(42186));

const httpClient = __importStar(__nccwpck_require__(96255));

@@ -2197,7 +1956,8 @@ function streamExtract(url, directory) {

let retryCount = 0;

while (retryCount < 5) {

try {

- return yield streamExtractExternal(url, directory);

+ yield streamExtractExternal(url, directory);

+ return;

}

catch (error) {

retryCount++;

@@ -2217,18 +1977,12 @@ function streamExtractExternal(url, directory) {

throw new Error(`Unexpected HTTP response from blob storage: ${response.message.statusCode} ${response.message.statusMessage}`);

}

const timeout = 30 * 1000; // 30 seconds

- let sha256Digest = undefined;

return new Promise((resolve, reject) => {

const timerFn = () => {

response.message.destroy(new Error(`Blob storage chunk did not respond in ${timeout}ms`));

};

const timer = setTimeout(timerFn, timeout);

- const hashStream = crypto.createHash('sha256').setEncoding('hex');

- const passThrough = new stream.PassThrough();

- response.message.pipe(passThrough);

- passThrough.pipe(hashStream);

- const extractStream = passThrough;

- extractStream

+ response.message

.on('data', () => {

timer.refresh();

})

@@ -2240,12 +1994,7 @@ function streamExtractExternal(url, directory) {

.pipe(unzip_stream_1.default.Extract({ path: directory }))

.on('close', () => {

clearTimeout(timer);

- if (hashStream) {

- hashStream.end();

- sha256Digest = hashStream.read();

- core.info(`SHA256 digest of downloaded artifact is ${sha256Digest}`);

- }

- resolve({ sha256Digest: `sha256:${sha256Digest}` });

+ resolve();

})

.on('error', (error) => {

reject(error);

@@ -2258,7 +2007,6 @@ function downloadArtifactPublic(artifactId, repositoryOwner, repositoryName, tok

return __awaiter(this, void 0, void 0, function* () {

const downloadPath = yield resolveOrCreateDirectory(options === null || options === void 0 ? void 0 : options.path);

const api = github.getOctokit(token);

- let digestMismatch = false;

core.info(`Downloading artifact '${artifactId}' from '${repositoryOwner}/${repositoryName}'`);

const { headers, status } = yield api.rest.actions.downloadArtifact({

owner: repositoryOwner,

@@ -2279,20 +2027,13 @@ function downloadArtifactPublic(artifactId, repositoryOwner, repositoryName, tok

core.info(`Redirecting to blob download url: ${scrubQueryParameters(location)}`);

try {

core.info(`Starting download of artifact to: ${downloadPath}`);

- const extractResponse = yield streamExtract(location, downloadPath);

+ yield streamExtract(location, downloadPath);

core.info(`Artifact download completed successfully.`);

- if (options === null || options === void 0 ? void 0 : options.expectedHash) {

- if ((options === null || options === void 0 ? void 0 : options.expectedHash) !== extractResponse.sha256Digest) {

- digestMismatch = true;

- core.debug(`Computed digest: ${extractResponse.sha256Digest}`);

- core.debug(`Expected digest: ${options.expectedHash}`);

- }

- }

}

catch (error) {

throw new Error(`Unable to download and extract artifact: ${error.message}`);

}

- return { downloadPath, digestMismatch };

+ return { downloadPath };

});

}

exports.downloadArtifactPublic = downloadArtifactPublic;

@@ -2300,7 +2041,6 @@ function downloadArtifactInternal(artifactId, options) {

return __awaiter(this, void 0, void 0, function* () {

const downloadPath = yield resolveOrCreateDirectory(options === null || options === void 0 ? void 0 : options.path);

const artifactClient = (0, artifact_twirp_client_1.internalArtifactTwirpClient)();

- let digestMismatch = false;

const { workflowRunBackendId, workflowJobRunBackendId } = (0, util_1.getBackendIdsFromToken)();

const listReq = {

workflowRunBackendId,

@@ -2323,20 +2063,13 @@ function downloadArtifactInternal(artifactId, options) {

core.info(`Redirecting to blob download url: ${scrubQueryParameters(signedUrl)}`);

try {

core.info(`Starting download of artifact to: ${downloadPath}`);

- const extractResponse = yield streamExtract(signedUrl, downloadPath);

+ yield streamExtract(signedUrl, downloadPath);

core.info(`Artifact download completed successfully.`);

- if (options === null || options === void 0 ? void 0 : options.expectedHash) {

- if ((options === null || options === void 0 ? void 0 : options.expectedHash) !== extractResponse.sha256Digest) {

- digestMismatch = true;

- core.debug(`Computed digest: ${extractResponse.sha256Digest}`);

- core.debug(`Expected digest: ${options.expectedHash}`);

- }

- }

}

catch (error) {

throw new Error(`Unable to download and extract artifact: ${error.message}`);

}

- return { downloadPath, digestMismatch };

+ return { downloadPath };

});

}

exports.downloadArtifactInternal = downloadArtifactInternal;

@@ -2442,17 +2175,13 @@ function getArtifactPublic(artifactName, workflowRunId, repositoryOwner, reposit

name: artifact.name,

id: artifact.id,

size: artifact.size_in_bytes,

- createdAt: artifact.created_at

- ? new Date(artifact.created_at)

- : undefined,

- digest: artifact.digest

+ createdAt: artifact.created_at ? new Date(artifact.created_at) : undefined

}

};

});

}

exports.getArtifactPublic = getArtifactPublic;

function getArtifactInternal(artifactName) {

- var _a;

return __awaiter(this, void 0, void 0, function* () {

const artifactClient = (0, artifact_twirp_client_1.internalArtifactTwirpClient)();

const { workflowRunBackendId, workflowJobRunBackendId } = (0, util_1.getBackendIdsFromToken)();

@@ -2479,8 +2208,7 @@ function getArtifactInternal(artifactName) {

size: Number(artifact.size),

createdAt: artifact.createdAt

? generated_1.Timestamp.toDate(artifact.createdAt)

- : undefined,

- digest: (_a = artifact.digest) === null || _a === void 0 ? void 0 : _a.value

+ : undefined

}

};

});

@@ -2534,7 +2262,7 @@ function listArtifactsPublic(workflowRunId, repositoryOwner, repositoryName, tok

};

const github = (0, github_1.getOctokit)(token, opts, plugin_retry_1.retry, plugin_request_log_1.requestLog);

let currentPageNumber = 1;

- const { data: listArtifactResponse } = yield github.request('GET /repos/{owner}/{repo}/actions/runs/{run_id}/artifacts', {

+ const { data: listArtifactResponse } = yield github.rest.actions.listWorkflowRunArtifacts({

owner: repositoryOwner,

repo: repositoryName,

run_id: workflowRunId,

@@ -2553,18 +2281,14 @@ function listArtifactsPublic(workflowRunId, repositoryOwner, repositoryName, tok

name: artifact.name,

id: artifact.id,

size: artifact.size_in_bytes,

- createdAt: artifact.created_at

- ? new Date(artifact.created_at)

- : undefined,

- digest: artifact.digest

+ createdAt: artifact.created_at ? new Date(artifact.created_at) : undefined

});

}

- // Move to the next page

- currentPageNumber++;

// Iterate over any remaining pages

for (currentPageNumber; currentPageNumber < numberOfPages; currentPageNumber++) {

+ currentPageNumber++;

(0, core_1.debug)(`Fetching page ${currentPageNumber} of artifact list`);

- const { data: listArtifactResponse } = yield github.request('GET /repos/{owner}/{repo}/actions/runs/{run_id}/artifacts', {

+ const { data: listArtifactResponse } = yield github.rest.actions.listWorkflowRunArtifacts({

owner: repositoryOwner,

repo: repositoryName,

run_id: workflowRunId,

@@ -2578,8 +2302,7 @@ function listArtifactsPublic(workflowRunId, repositoryOwner, repositoryName, tok

size: artifact.size_in_bytes,

createdAt: artifact.created_at

? new Date(artifact.created_at)

- : undefined,

- digest: artifact.digest

+ : undefined

});

}

}

@@ -2602,18 +2325,14 @@ function listArtifactsInternal(latest = false) {

workflowJobRunBackendId

};

const res = yield artifactClient.ListArtifacts(req);

- let artifacts = res.artifacts.map(artifact => {

- var _a;

- return ({

- name: artifact.name,

- id: Number(artifact.databaseId),

- size: Number(artifact.size),

- createdAt: artifact.createdAt

- ? generated_1.Timestamp.toDate(artifact.createdAt)

- : undefined,

- digest: (_a = artifact.digest) === null || _a === void 0 ? void 0 : _a.value

- });

- });

+ let artifacts = res.artifacts.map(artifact => ({

+ name: artifact.name,

+ id: Number(artifact.databaseId),

+ size: Number(artifact.size),

+ createdAt: artifact.createdAt

+ ? generated_1.Timestamp.toDate(artifact.createdAt)

+ : undefined

+ }));

if (latest) {

artifacts = filterLatest(artifacts);

}

@@ -2725,7 +2444,6 @@ const generated_1 = __nccwpck_require__(49960);

const config_1 = __nccwpck_require__(74610);

const user_agent_1 = __nccwpck_require__(85164);

const errors_1 = __nccwpck_require__(38182);

-const util_1 = __nccwpck_require__(63062);

class ArtifactHttpClient {

constructor(userAgent, maxAttempts, baseRetryIntervalMilliseconds, retryMultiplier) {

this.maxAttempts = 5;

@@ -2778,7 +2496,6 @@ class ArtifactHttpClient {

(0, core_1.debug)(`[Response] - ${response.message.statusCode}`);

(0, core_1.debug)(`Headers: ${JSON.stringify(response.message.headers, null, 2)}`);

const body = JSON.parse(rawBody);

- (0, util_1.maskSecretUrls)(body);

(0, core_1.debug)(`Body: ${JSON.stringify(body, null, 2)}`);

if (this.isSuccessStatusCode(statusCode)) {

return { response, body };

@@ -3095,11 +2812,10 @@ var __importDefault = (this && this.__importDefault) || function (mod) {

return (mod && mod.__esModule) ? mod : { "default": mod };

};

Object.defineProperty(exports, "__esModule", ({ value: true }));

-exports.maskSecretUrls = exports.maskSigUrl = exports.getBackendIdsFromToken = void 0;

+exports.getBackendIdsFromToken = void 0;

const core = __importStar(__nccwpck_require__(42186));

const config_1 = __nccwpck_require__(74610);

const jwt_decode_1 = __importDefault(__nccwpck_require__(84329));

-const core_1 = __nccwpck_require__(42186);

const InvalidJwtError = new Error('Failed to get backend IDs: The provided JWT token is invalid and/or missing claims');

// uses the JWT token claims to get the

// workflow run and workflow job run backend ids

@@ -3148,74 +2864,6 @@ function getBackendIdsFromToken() {

throw InvalidJwtError;

}

exports.getBackendIdsFromToken = getBackendIdsFromToken;

-/**

- * Masks the `sig` parameter in a URL and sets it as a secret.

- *

- * @param url - The URL containing the signature parameter to mask

- * @remarks

- * This function attempts to parse the provided URL and identify the 'sig' query parameter.

- * If found, it registers both the raw and URL-encoded signature values as secrets using

- * the Actions `setSecret` API, which prevents them from being displayed in logs.

- *

- * The function handles errors gracefully if URL parsing fails, logging them as debug messages.

- *

- * @example

- * ```typescript

- * // Mask a signature in an Azure SAS token URL

- * maskSigUrl('https://example.blob.core.windows.net/container/file.txt?sig=abc123&se=2023-01-01');

- * ```

- */

-function maskSigUrl(url) {

- if (!url)

- return;

- try {

- const parsedUrl = new URL(url);

- const signature = parsedUrl.searchParams.get('sig');

- if (signature) {

- (0, core_1.setSecret)(signature);

- (0, core_1.setSecret)(encodeURIComponent(signature));

- }

- }

- catch (error) {

- (0, core_1.debug)(`Failed to parse URL: ${url} ${error instanceof Error ? error.message : String(error)}`);

- }

-}

-exports.maskSigUrl = maskSigUrl;

-/**

- * Masks sensitive information in URLs containing signature parameters.

- * Currently supports masking 'sig' parameters in the 'signed_upload_url'

- * and 'signed_download_url' properties of the provided object.

- *

- * @param body - The object should contain a signature

- * @remarks

- * This function extracts URLs from the object properties and calls maskSigUrl

- * on each one to redact sensitive signature information. The function doesn't

- * modify the original object; it only marks the signatures as secrets for

- * logging purposes.

- *

- * @example

- * ```typescript

- * const responseBody = {

- * signed_upload_url: 'https://example.com?sig=abc123',

- * signed_download_url: 'https://example.com?sig=def456'

- * };

- * maskSecretUrls(responseBody);

- * ```

- */

-function maskSecretUrls(body) {

- if (typeof body !== 'object' || body === null) {

- (0, core_1.debug)('body is not an object or is null');

- return;

- }

- if ('signed_upload_url' in body &&

- typeof body.signed_upload_url === 'string') {

- maskSigUrl(body.signed_upload_url);

- }

- if ('signed_url' in body && typeof body.signed_url === 'string') {

- maskSigUrl(body.signed_url);

- }

-}

-exports.maskSecretUrls = maskSecretUrls;

//# sourceMappingURL=util.js.map

/***/ }),

@@ -3322,7 +2970,7 @@ function uploadZipToBlobStorage(authenticatedUploadURL, zipUploadStream) {

core.info('Finished uploading artifact content to blob storage!');

hashStream.end();

sha256Hash = hashStream.read();

- core.info(`SHA256 digest of uploaded artifact zip is ${sha256Hash}`);

+ core.info(`SHA256 hash of uploaded artifact zip is ${sha256Hash}`);

if (uploadByteCount === 0) {

core.warning(`No data was uploaded to blob storage. Reported upload byte count is 0.`);

}

@@ -135835,7 +135483,7 @@ module.exports = index;

/***/ ((module) => {

"use strict";

-module.exports = JSON.parse('{"name":"@actions/artifact","version":"2.3.2","preview":true,"description":"Actions artifact lib","keywords":["github","actions","artifact"],"homepage":"https://github.com/actions/toolkit/tree/main/packages/artifact","license":"MIT","main":"lib/artifact.js","types":"lib/artifact.d.ts","directories":{"lib":"lib","test":"__tests__"},"files":["lib","!.DS_Store"],"publishConfig":{"access":"public"},"repository":{"type":"git","url":"git+https://github.com/actions/toolkit.git","directory":"packages/artifact"},"scripts":{"audit-moderate":"npm install && npm audit --json --audit-level=moderate > audit.json","test":"cd ../../ && npm run test ./packages/artifact","bootstrap":"cd ../../ && npm run bootstrap","tsc-run":"tsc","tsc":"npm run bootstrap && npm run tsc-run","gen:docs":"typedoc --plugin typedoc-plugin-markdown --out docs/generated src/artifact.ts --githubPages false --readme none"},"bugs":{"url":"https://github.com/actions/toolkit/issues"},"dependencies":{"@actions/core":"^1.10.0","@actions/github":"^5.1.1","@actions/http-client":"^2.1.0","@azure/storage-blob":"^12.15.0","@octokit/core":"^3.5.1","@octokit/plugin-request-log":"^1.0.4","@octokit/plugin-retry":"^3.0.9","@octokit/request-error":"^5.0.0","@protobuf-ts/plugin":"^2.2.3-alpha.1","archiver":"^7.0.1","jwt-decode":"^3.1.2","unzip-stream":"^0.3.1"},"devDependencies":{"@types/archiver":"^5.3.2","@types/unzip-stream":"^0.3.4","typedoc":"^0.25.4","typedoc-plugin-markdown":"^3.17.1","typescript":"^5.2.2"}}');

+module.exports = JSON.parse('{"name":"@actions/artifact","version":"2.2.2","preview":true,"description":"Actions artifact lib","keywords":["github","actions","artifact"],"homepage":"https://github.com/actions/toolkit/tree/main/packages/artifact","license":"MIT","main":"lib/artifact.js","types":"lib/artifact.d.ts","directories":{"lib":"lib","test":"__tests__"},"files":["lib","!.DS_Store"],"publishConfig":{"access":"public"},"repository":{"type":"git","url":"git+https://github.com/actions/toolkit.git","directory":"packages/artifact"},"scripts":{"audit-moderate":"npm install && npm audit --json --audit-level=moderate > audit.json","test":"cd ../../ && npm run test ./packages/artifact","bootstrap":"cd ../../ && npm run bootstrap","tsc-run":"tsc","tsc":"npm run bootstrap && npm run tsc-run","gen:docs":"typedoc --plugin typedoc-plugin-markdown --out docs/generated src/artifact.ts --githubPages false --readme none"},"bugs":{"url":"https://github.com/actions/toolkit/issues"},"dependencies":{"@actions/core":"^1.10.0","@actions/github":"^5.1.1","@actions/http-client":"^2.1.0","@azure/storage-blob":"^12.15.0","@octokit/core":"^3.5.1","@octokit/plugin-request-log":"^1.0.4","@octokit/plugin-retry":"^3.0.9","@octokit/request-error":"^5.0.0","@protobuf-ts/plugin":"^2.2.3-alpha.1","archiver":"^7.0.1","jwt-decode":"^3.1.2","unzip-stream":"^0.3.1"},"devDependencies":{"@types/archiver":"^5.3.2","@types/unzip-stream":"^0.3.4","typedoc":"^0.25.4","typedoc-plugin-markdown":"^3.17.1","typescript":"^5.2.2"}}');

/***/ }),

diff --git a/dist/upload/index.js b/dist/upload/index.js

index 89238fa..3966dc5 100644

--- a/dist/upload/index.js

+++ b/dist/upload/index.js

@@ -824,7 +824,7 @@ __exportStar(__nccwpck_require__(49773), exports);

"use strict";

Object.defineProperty(exports, "__esModule", ({ value: true }));

-exports.ArtifactService = exports.DeleteArtifactResponse = exports.DeleteArtifactRequest = exports.GetSignedArtifactURLResponse = exports.GetSignedArtifactURLRequest = exports.ListArtifactsResponse_MonolithArtifact = exports.ListArtifactsResponse = exports.ListArtifactsRequest = exports.FinalizeArtifactResponse = exports.FinalizeArtifactRequest = exports.CreateArtifactResponse = exports.CreateArtifactRequest = exports.FinalizeMigratedArtifactResponse = exports.FinalizeMigratedArtifactRequest = exports.MigrateArtifactResponse = exports.MigrateArtifactRequest = void 0;

+exports.ArtifactService = exports.DeleteArtifactResponse = exports.DeleteArtifactRequest = exports.GetSignedArtifactURLResponse = exports.GetSignedArtifactURLRequest = exports.ListArtifactsResponse_MonolithArtifact = exports.ListArtifactsResponse = exports.ListArtifactsRequest = exports.FinalizeArtifactResponse = exports.FinalizeArtifactRequest = exports.CreateArtifactResponse = exports.CreateArtifactRequest = void 0;

// @generated by protobuf-ts 2.9.1 with parameter long_type_string,client_none,generate_dependencies

// @generated from protobuf file "results/api/v1/artifact.proto" (package "github.actions.results.api.v1", syntax proto3)

// tslint:disable

@@ -838,236 +838,6 @@ const wrappers_1 = __nccwpck_require__(8626);

const wrappers_2 = __nccwpck_require__(8626);

const timestamp_1 = __nccwpck_require__(54622);

// @generated message type with reflection information, may provide speed optimized methods

-class MigrateArtifactRequest$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.MigrateArtifactRequest", [

- { no: 1, name: "workflow_run_backend_id", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 2, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 3, name: "expires_at", kind: "message", T: () => timestamp_1.Timestamp }

- ]);

- }

- create(value) {

- const message = { workflowRunBackendId: "", name: "" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* string workflow_run_backend_id */ 1:

- message.workflowRunBackendId = reader.string();

- break;

- case /* string name */ 2:

- message.name = reader.string();

- break;

- case /* google.protobuf.Timestamp expires_at */ 3:

- message.expiresAt = timestamp_1.Timestamp.internalBinaryRead(reader, reader.uint32(), options, message.expiresAt);

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* string workflow_run_backend_id = 1; */

- if (message.workflowRunBackendId !== "")

- writer.tag(1, runtime_1.WireType.LengthDelimited).string(message.workflowRunBackendId);

- /* string name = 2; */

- if (message.name !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.name);

- /* google.protobuf.Timestamp expires_at = 3; */

- if (message.expiresAt)

- timestamp_1.Timestamp.internalBinaryWrite(message.expiresAt, writer.tag(3, runtime_1.WireType.LengthDelimited).fork(), options).join();

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.MigrateArtifactRequest

- */

-exports.MigrateArtifactRequest = new MigrateArtifactRequest$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class MigrateArtifactResponse$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.MigrateArtifactResponse", [

- { no: 1, name: "ok", kind: "scalar", T: 8 /*ScalarType.BOOL*/ },

- { no: 2, name: "signed_upload_url", kind: "scalar", T: 9 /*ScalarType.STRING*/ }

- ]);

- }

- create(value) {

- const message = { ok: false, signedUploadUrl: "" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* bool ok */ 1:

- message.ok = reader.bool();

- break;

- case /* string signed_upload_url */ 2:

- message.signedUploadUrl = reader.string();

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* bool ok = 1; */

- if (message.ok !== false)

- writer.tag(1, runtime_1.WireType.Varint).bool(message.ok);

- /* string signed_upload_url = 2; */

- if (message.signedUploadUrl !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.signedUploadUrl);

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.MigrateArtifactResponse

- */

-exports.MigrateArtifactResponse = new MigrateArtifactResponse$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class FinalizeMigratedArtifactRequest$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.FinalizeMigratedArtifactRequest", [

- { no: 1, name: "workflow_run_backend_id", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 2, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 3, name: "size", kind: "scalar", T: 3 /*ScalarType.INT64*/ }

- ]);

- }

- create(value) {

- const message = { workflowRunBackendId: "", name: "", size: "0" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* string workflow_run_backend_id */ 1:

- message.workflowRunBackendId = reader.string();

- break;

- case /* string name */ 2:

- message.name = reader.string();

- break;

- case /* int64 size */ 3:

- message.size = reader.int64().toString();

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* string workflow_run_backend_id = 1; */

- if (message.workflowRunBackendId !== "")

- writer.tag(1, runtime_1.WireType.LengthDelimited).string(message.workflowRunBackendId);

- /* string name = 2; */

- if (message.name !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.name);

- /* int64 size = 3; */

- if (message.size !== "0")

- writer.tag(3, runtime_1.WireType.Varint).int64(message.size);

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.FinalizeMigratedArtifactRequest

- */

-exports.FinalizeMigratedArtifactRequest = new FinalizeMigratedArtifactRequest$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class FinalizeMigratedArtifactResponse$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.FinalizeMigratedArtifactResponse", [

- { no: 1, name: "ok", kind: "scalar", T: 8 /*ScalarType.BOOL*/ },

- { no: 2, name: "artifact_id", kind: "scalar", T: 3 /*ScalarType.INT64*/ }

- ]);

- }

- create(value) {

- const message = { ok: false, artifactId: "0" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* bool ok */ 1:

- message.ok = reader.bool();

- break;

- case /* int64 artifact_id */ 2:

- message.artifactId = reader.int64().toString();

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* bool ok = 1; */

- if (message.ok !== false)

- writer.tag(1, runtime_1.WireType.Varint).bool(message.ok);

- /* int64 artifact_id = 2; */

- if (message.artifactId !== "0")

- writer.tag(2, runtime_1.WireType.Varint).int64(message.artifactId);

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.FinalizeMigratedArtifactResponse

- */

-exports.FinalizeMigratedArtifactResponse = new FinalizeMigratedArtifactResponse$Type();

-// @generated message type with reflection information, may provide speed optimized methods

class CreateArtifactRequest$Type extends runtime_5.MessageType {

constructor() {

super("github.actions.results.api.v1.CreateArtifactRequest", [

@@ -1449,8 +1219,7 @@ class ListArtifactsResponse_MonolithArtifact$Type extends runtime_5.MessageType

{ no: 3, name: "database_id", kind: "scalar", T: 3 /*ScalarType.INT64*/ },

{ no: 4, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

{ no: 5, name: "size", kind: "scalar", T: 3 /*ScalarType.INT64*/ },

- { no: 6, name: "created_at", kind: "message", T: () => timestamp_1.Timestamp },

- { no: 7, name: "digest", kind: "message", T: () => wrappers_2.StringValue }

+ { no: 6, name: "created_at", kind: "message", T: () => timestamp_1.Timestamp }

]);

}

create(value) {

@@ -1483,9 +1252,6 @@ class ListArtifactsResponse_MonolithArtifact$Type extends runtime_5.MessageType

case /* google.protobuf.Timestamp created_at */ 6:

message.createdAt = timestamp_1.Timestamp.internalBinaryRead(reader, reader.uint32(), options, message.createdAt);

break;

- case /* google.protobuf.StringValue digest */ 7:

- message.digest = wrappers_2.StringValue.internalBinaryRead(reader, reader.uint32(), options, message.digest);

- break;

default:

let u = options.readUnknownField;

if (u === "throw")

@@ -1516,9 +1282,6 @@ class ListArtifactsResponse_MonolithArtifact$Type extends runtime_5.MessageType

/* google.protobuf.Timestamp created_at = 6; */

if (message.createdAt)

timestamp_1.Timestamp.internalBinaryWrite(message.createdAt, writer.tag(6, runtime_1.WireType.LengthDelimited).fork(), options).join();

- /* google.protobuf.StringValue digest = 7; */

- if (message.digest)

- wrappers_2.StringValue.internalBinaryWrite(message.digest, writer.tag(7, runtime_1.WireType.LengthDelimited).fork(), options).join();

let u = options.writeUnknownFields;

if (u !== false)

(u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

@@ -1760,9 +1523,7 @@ exports.ArtifactService = new runtime_rpc_1.ServiceType("github.actions.results.

{ name: "FinalizeArtifact", options: {}, I: exports.FinalizeArtifactRequest, O: exports.FinalizeArtifactResponse },

{ name: "ListArtifacts", options: {}, I: exports.ListArtifactsRequest, O: exports.ListArtifactsResponse },

{ name: "GetSignedArtifactURL", options: {}, I: exports.GetSignedArtifactURLRequest, O: exports.GetSignedArtifactURLResponse },

- { name: "DeleteArtifact", options: {}, I: exports.DeleteArtifactRequest, O: exports.DeleteArtifactResponse },

- { name: "MigrateArtifact", options: {}, I: exports.MigrateArtifactRequest, O: exports.MigrateArtifactResponse },

- { name: "FinalizeMigratedArtifact", options: {}, I: exports.FinalizeMigratedArtifactRequest, O: exports.FinalizeMigratedArtifactResponse }

+ { name: "DeleteArtifact", options: {}, I: exports.DeleteArtifactRequest, O: exports.DeleteArtifactResponse }

]);

//# sourceMappingURL=artifact.js.map

@@ -2159,8 +1920,6 @@ var __importDefault = (this && this.__importDefault) || function (mod) {

Object.defineProperty(exports, "__esModule", ({ value: true }));

exports.downloadArtifactInternal = exports.downloadArtifactPublic = exports.streamExtractExternal = void 0;

const promises_1 = __importDefault(__nccwpck_require__(73292));

-const crypto = __importStar(__nccwpck_require__(6113));

-const stream = __importStar(__nccwpck_require__(12781));

const github = __importStar(__nccwpck_require__(21260));

const core = __importStar(__nccwpck_require__(42186));

const httpClient = __importStar(__nccwpck_require__(96255));

@@ -2197,7 +1956,8 @@ function streamExtract(url, directory) {

let retryCount = 0;

while (retryCount < 5) {

try {

- return yield streamExtractExternal(url, directory);

+ yield streamExtractExternal(url, directory);

+ return;

}

catch (error) {

retryCount++;

@@ -2217,18 +1977,12 @@ function streamExtractExternal(url, directory) {

throw new Error(`Unexpected HTTP response from blob storage: ${response.message.statusCode} ${response.message.statusMessage}`);

}

const timeout = 30 * 1000; // 30 seconds

- let sha256Digest = undefined;

return new Promise((resolve, reject) => {

const timerFn = () => {

response.message.destroy(new Error(`Blob storage chunk did not respond in ${timeout}ms`));

};

const timer = setTimeout(timerFn, timeout);

- const hashStream = crypto.createHash('sha256').setEncoding('hex');

- const passThrough = new stream.PassThrough();

- response.message.pipe(passThrough);

- passThrough.pipe(hashStream);

- const extractStream = passThrough;

- extractStream

+ response.message

.on('data', () => {

timer.refresh();

})

@@ -2240,12 +1994,7 @@ function streamExtractExternal(url, directory) {

.pipe(unzip_stream_1.default.Extract({ path: directory }))

.on('close', () => {

clearTimeout(timer);

- if (hashStream) {

- hashStream.end();

- sha256Digest = hashStream.read();

- core.info(`SHA256 digest of downloaded artifact is ${sha256Digest}`);

- }

- resolve({ sha256Digest: `sha256:${sha256Digest}` });

+ resolve();

})

.on('error', (error) => {

reject(error);

@@ -2258,7 +2007,6 @@ function downloadArtifactPublic(artifactId, repositoryOwner, repositoryName, tok

return __awaiter(this, void 0, void 0, function* () {

const downloadPath = yield resolveOrCreateDirectory(options === null || options === void 0 ? void 0 : options.path);

const api = github.getOctokit(token);

- let digestMismatch = false;

core.info(`Downloading artifact '${artifactId}' from '${repositoryOwner}/${repositoryName}'`);

const { headers, status } = yield api.rest.actions.downloadArtifact({

owner: repositoryOwner,

@@ -2279,20 +2027,13 @@ function downloadArtifactPublic(artifactId, repositoryOwner, repositoryName, tok

core.info(`Redirecting to blob download url: ${scrubQueryParameters(location)}`);

try {

core.info(`Starting download of artifact to: ${downloadPath}`);

- const extractResponse = yield streamExtract(location, downloadPath);

+ yield streamExtract(location, downloadPath);

core.info(`Artifact download completed successfully.`);

- if (options === null || options === void 0 ? void 0 : options.expectedHash) {

- if ((options === null || options === void 0 ? void 0 : options.expectedHash) !== extractResponse.sha256Digest) {

- digestMismatch = true;

- core.debug(`Computed digest: ${extractResponse.sha256Digest}`);

- core.debug(`Expected digest: ${options.expectedHash}`);

- }

- }

}

catch (error) {

throw new Error(`Unable to download and extract artifact: ${error.message}`);

}

- return { downloadPath, digestMismatch };

+ return { downloadPath };

});

}

exports.downloadArtifactPublic = downloadArtifactPublic;

@@ -2300,7 +2041,6 @@ function downloadArtifactInternal(artifactId, options) {

return __awaiter(this, void 0, void 0, function* () {

const downloadPath = yield resolveOrCreateDirectory(options === null || options === void 0 ? void 0 : options.path);

const artifactClient = (0, artifact_twirp_client_1.internalArtifactTwirpClient)();

- let digestMismatch = false;

const { workflowRunBackendId, workflowJobRunBackendId } = (0, util_1.getBackendIdsFromToken)();

const listReq = {

workflowRunBackendId,

@@ -2323,20 +2063,13 @@ function downloadArtifactInternal(artifactId, options) {

core.info(`Redirecting to blob download url: ${scrubQueryParameters(signedUrl)}`);

try {

core.info(`Starting download of artifact to: ${downloadPath}`);

- const extractResponse = yield streamExtract(signedUrl, downloadPath);

+ yield streamExtract(signedUrl, downloadPath);

core.info(`Artifact download completed successfully.`);

- if (options === null || options === void 0 ? void 0 : options.expectedHash) {

- if ((options === null || options === void 0 ? void 0 : options.expectedHash) !== extractResponse.sha256Digest) {

- digestMismatch = true;

- core.debug(`Computed digest: ${extractResponse.sha256Digest}`);

- core.debug(`Expected digest: ${options.expectedHash}`);

- }

- }

}

catch (error) {

throw new Error(`Unable to download and extract artifact: ${error.message}`);

}

- return { downloadPath, digestMismatch };

+ return { downloadPath };

});

}

exports.downloadArtifactInternal = downloadArtifactInternal;

@@ -2442,17 +2175,13 @@ function getArtifactPublic(artifactName, workflowRunId, repositoryOwner, reposit

name: artifact.name,

id: artifact.id,

size: artifact.size_in_bytes,

- createdAt: artifact.created_at

- ? new Date(artifact.created_at)

- : undefined,

- digest: artifact.digest

+ createdAt: artifact.created_at ? new Date(artifact.created_at) : undefined

}

};

});

}

exports.getArtifactPublic = getArtifactPublic;

function getArtifactInternal(artifactName) {

- var _a;

return __awaiter(this, void 0, void 0, function* () {

const artifactClient = (0, artifact_twirp_client_1.internalArtifactTwirpClient)();

const { workflowRunBackendId, workflowJobRunBackendId } = (0, util_1.getBackendIdsFromToken)();

@@ -2479,8 +2208,7 @@ function getArtifactInternal(artifactName) {

size: Number(artifact.size),

createdAt: artifact.createdAt

? generated_1.Timestamp.toDate(artifact.createdAt)

- : undefined,

- digest: (_a = artifact.digest) === null || _a === void 0 ? void 0 : _a.value

+ : undefined

}

};

});

@@ -2534,7 +2262,7 @@ function listArtifactsPublic(workflowRunId, repositoryOwner, repositoryName, tok

};

const github = (0, github_1.getOctokit)(token, opts, plugin_retry_1.retry, plugin_request_log_1.requestLog);

let currentPageNumber = 1;

- const { data: listArtifactResponse } = yield github.request('GET /repos/{owner}/{repo}/actions/runs/{run_id}/artifacts', {

+ const { data: listArtifactResponse } = yield github.rest.actions.listWorkflowRunArtifacts({

owner: repositoryOwner,

repo: repositoryName,

run_id: workflowRunId,

@@ -2553,18 +2281,14 @@ function listArtifactsPublic(workflowRunId, repositoryOwner, repositoryName, tok

name: artifact.name,

id: artifact.id,

size: artifact.size_in_bytes,

- createdAt: artifact.created_at

- ? new Date(artifact.created_at)

- : undefined,

- digest: artifact.digest

+ createdAt: artifact.created_at ? new Date(artifact.created_at) : undefined

});

}

- // Move to the next page

- currentPageNumber++;

// Iterate over any remaining pages

for (currentPageNumber; currentPageNumber < numberOfPages; currentPageNumber++) {

+ currentPageNumber++;

(0, core_1.debug)(`Fetching page ${currentPageNumber} of artifact list`);

- const { data: listArtifactResponse } = yield github.request('GET /repos/{owner}/{repo}/actions/runs/{run_id}/artifacts', {

+ const { data: listArtifactResponse } = yield github.rest.actions.listWorkflowRunArtifacts({

owner: repositoryOwner,

repo: repositoryName,

run_id: workflowRunId,

@@ -2578,8 +2302,7 @@ function listArtifactsPublic(workflowRunId, repositoryOwner, repositoryName, tok

size: artifact.size_in_bytes,

createdAt: artifact.created_at

? new Date(artifact.created_at)

- : undefined,

- digest: artifact.digest

+ : undefined

});

}

}
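Reviewer note: on the `+` side of the hunk above, `currentPageNumber` is incremented both in the loop body and in the `for` update clause. For comparison, here is a minimal sketch of a loop that requests each page exactly once. It assumes `numberOfPages` is the total page count, and `fetchPage` is a hypothetical stand-in for the `listWorkflowRunArtifacts` call, so nothing below talks to the GitHub API.

```javascript
// Hypothetical helper: `fetchPage(page)` resolves to the items on that
// 1-based page. It stands in for the real API request.
async function listAllPages(fetchPage, numberOfPages) {
  const items = [];
  // One increment per iteration, so no page is skipped or requested twice.
  for (let page = 1; page <= numberOfPages; page++) {
    items.push(...(await fetchPage(page)));
  }
  return items;
}

// Usage with a fake fetcher that records which pages were requested.
const requested = [];
const fakeFetch = async page => {
  requested.push(page);
  return [`artifact-${page}`];
};
const listed = listAllPages(fakeFetch, 3);
listed.then(items => console.log(requested, items));
```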

@@ -2602,18 +2325,14 @@ function listArtifactsInternal(latest = false) {

workflowJobRunBackendId

};

const res = yield artifactClient.ListArtifacts(req);

- let artifacts = res.artifacts.map(artifact => {

- var _a;

- return ({

- name: artifact.name,

- id: Number(artifact.databaseId),

- size: Number(artifact.size),

- createdAt: artifact.createdAt

- ? generated_1.Timestamp.toDate(artifact.createdAt)

- : undefined,

- digest: (_a = artifact.digest) === null || _a === void 0 ? void 0 : _a.value

- });

- });

+ let artifacts = res.artifacts.map(artifact => ({

+ name: artifact.name,

+ id: Number(artifact.databaseId),

+ size: Number(artifact.size),

+ createdAt: artifact.createdAt

+ ? generated_1.Timestamp.toDate(artifact.createdAt)

+ : undefined

+ }));

if (latest) {

artifacts = filterLatest(artifacts);

}

@@ -2725,7 +2444,6 @@ const generated_1 = __nccwpck_require__(49960);

const config_1 = __nccwpck_require__(74610);

const user_agent_1 = __nccwpck_require__(85164);

const errors_1 = __nccwpck_require__(38182);

-const util_1 = __nccwpck_require__(63062);

class ArtifactHttpClient {

constructor(userAgent, maxAttempts, baseRetryIntervalMilliseconds, retryMultiplier) {

this.maxAttempts = 5;

@@ -2778,7 +2496,6 @@ class ArtifactHttpClient {

(0, core_1.debug)(`[Response] - ${response.message.statusCode}`);

(0, core_1.debug)(`Headers: ${JSON.stringify(response.message.headers, null, 2)}`);

const body = JSON.parse(rawBody);

- (0, util_1.maskSecretUrls)(body);

(0, core_1.debug)(`Body: ${JSON.stringify(body, null, 2)}`);

if (this.isSuccessStatusCode(statusCode)) {

return { response, body };

@@ -3095,11 +2812,10 @@ var __importDefault = (this && this.__importDefault) || function (mod) {

return (mod && mod.__esModule) ? mod : { "default": mod };

};

Object.defineProperty(exports, "__esModule", ({ value: true }));

-exports.maskSecretUrls = exports.maskSigUrl = exports.getBackendIdsFromToken = void 0;

+exports.getBackendIdsFromToken = void 0;

const core = __importStar(__nccwpck_require__(42186));

const config_1 = __nccwpck_require__(74610);

const jwt_decode_1 = __importDefault(__nccwpck_require__(84329));

-const core_1 = __nccwpck_require__(42186);

const InvalidJwtError = new Error('Failed to get backend IDs: The provided JWT token is invalid and/or missing claims');

// uses the JWT token claims to get the

// workflow run and workflow job run backend ids

@@ -3148,74 +2864,6 @@ function getBackendIdsFromToken() {

throw InvalidJwtError;

}

exports.getBackendIdsFromToken = getBackendIdsFromToken;

-/**

- * Masks the `sig` parameter in a URL and sets it as a secret.

- *

- * @param url - The URL containing the signature parameter to mask

- * @remarks

- * This function attempts to parse the provided URL and identify the 'sig' query parameter.

- * If found, it registers both the raw and URL-encoded signature values as secrets using

- * the Actions `setSecret` API, which prevents them from being displayed in logs.

- *

- * The function handles errors gracefully if URL parsing fails, logging them as debug messages.

- *

- * @example

- * ```typescript

- * // Mask a signature in an Azure SAS token URL

- * maskSigUrl('https://example.blob.core.windows.net/container/file.txt?sig=abc123&se=2023-01-01');

- * ```

- */

-function maskSigUrl(url) {

- if (!url)

- return;

- try {

- const parsedUrl = new URL(url);

- const signature = parsedUrl.searchParams.get('sig');

- if (signature) {

- (0, core_1.setSecret)(signature);

- (0, core_1.setSecret)(encodeURIComponent(signature));

- }

- }

- catch (error) {

- (0, core_1.debug)(`Failed to parse URL: ${url} ${error instanceof Error ? error.message : String(error)}`);

- }

-}

-exports.maskSigUrl = maskSigUrl;

-/**

- * Masks sensitive information in URLs containing signature parameters.

- * Currently supports masking 'sig' parameters in the 'signed_upload_url'

- * and 'signed_download_url' properties of the provided object.

- *

- * @param body - The object should contain a signature

- * @remarks

- * This function extracts URLs from the object properties and calls maskSigUrl

- * on each one to redact sensitive signature information. The function doesn't

- * modify the original object; it only marks the signatures as secrets for

- * logging purposes.

- *

- * @example

- * ```typescript

- * const responseBody = {

- * signed_upload_url: 'https://example.com?sig=abc123',

- * signed_download_url: 'https://example.com?sig=def456'

- * };

- * maskSecretUrls(responseBody);

- * ```

- */

-function maskSecretUrls(body) {

- if (typeof body !== 'object' || body === null) {

- (0, core_1.debug)('body is not an object or is null');

- return;

- }

- if ('signed_upload_url' in body &&

- typeof body.signed_upload_url === 'string') {

- maskSigUrl(body.signed_upload_url);

- }

- if ('signed_url' in body && typeof body.signed_url === 'string') {

- maskSigUrl(body.signed_url);

- }

-}

-exports.maskSecretUrls = maskSecretUrls;

//# sourceMappingURL=util.js.map

/***/ }),
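Reviewer note: the `maskSigUrl` helper removed above registers both the raw and the URL-encoded `sig` value as secrets. The sketch below illustrates why both forms matter. It is not the removed implementation: `registerSecret` is a hypothetical callback standing in for the Actions `setSecret` call, injected so the example runs without dependencies.

```javascript
// Sketch only: `registerSecret` is a hypothetical stand-in for `setSecret`.
function collectSigSecrets(url, registerSecret) {
  if (!url) return;
  try {
    // searchParams.get() returns the percent-decoded value.
    const signature = new URL(url).searchParams.get('sig');
    if (signature) {
      registerSecret(signature);
      // The encoded form is what appears in the URL when it is logged.
      registerSecret(encodeURIComponent(signature));
    }
  } catch (error) {
    // Unparseable input is ignored here; the removed helper logs a debug
    // message in this branch instead.
  }
}

const secrets = [];
collectSigSecrets(
  'https://example.blob.core.windows.net/container/file.txt?sig=a%2Bb%3D&se=2023-01-01',
  value => secrets.push(value)
);
console.log(secrets); // [ 'a+b=', 'a%2Bb%3D' ]
```

With only the decoded value registered, a logged URL containing `a%2Bb%3D` would not be matched by the mask.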

@@ -3322,7 +2970,7 @@ function uploadZipToBlobStorage(authenticatedUploadURL, zipUploadStream) {

core.info('Finished uploading artifact content to blob storage!');

hashStream.end();

sha256Hash = hashStream.read();

- core.info(`SHA256 digest of uploaded artifact zip is ${sha256Hash}`);

+ core.info(`SHA256 hash of uploaded artifact zip is ${sha256Hash}`);

if (uploadByteCount === 0) {

core.warning(`No data was uploaded to blob storage. Reported upload byte count is 0.`);

}

@@ -135845,7 +135493,7 @@ module.exports = index;

/***/ ((module) => {

"use strict";

-module.exports = JSON.parse('{"name":"@actions/artifact","version":"2.3.2","preview":true,"description":"Actions artifact lib","keywords":["github","actions","artifact"],"homepage":"https://github.com/actions/toolkit/tree/main/packages/artifact","license":"MIT","main":"lib/artifact.js","types":"lib/artifact.d.ts","directories":{"lib":"lib","test":"__tests__"},"files":["lib","!.DS_Store"],"publishConfig":{"access":"public"},"repository":{"type":"git","url":"git+https://github.com/actions/toolkit.git","directory":"packages/artifact"},"scripts":{"audit-moderate":"npm install && npm audit --json --audit-level=moderate > audit.json","test":"cd ../../ && npm run test ./packages/artifact","bootstrap":"cd ../../ && npm run bootstrap","tsc-run":"tsc","tsc":"npm run bootstrap && npm run tsc-run","gen:docs":"typedoc --plugin typedoc-plugin-markdown --out docs/generated src/artifact.ts --githubPages false --readme none"},"bugs":{"url":"https://github.com/actions/toolkit/issues"},"dependencies":{"@actions/core":"^1.10.0","@actions/github":"^5.1.1","@actions/http-client":"^2.1.0","@azure/storage-blob":"^12.15.0","@octokit/core":"^3.5.1","@octokit/plugin-request-log":"^1.0.4","@octokit/plugin-retry":"^3.0.9","@octokit/request-error":"^5.0.0","@protobuf-ts/plugin":"^2.2.3-alpha.1","archiver":"^7.0.1","jwt-decode":"^3.1.2","unzip-stream":"^0.3.1"},"devDependencies":{"@types/archiver":"^5.3.2","@types/unzip-stream":"^0.3.4","typedoc":"^0.25.4","typedoc-plugin-markdown":"^3.17.1","typescript":"^5.2.2"}}');

+module.exports = JSON.parse('{"name":"@actions/artifact","version":"2.2.2","preview":true,"description":"Actions artifact lib","keywords":["github","actions","artifact"],"homepage":"https://github.com/actions/toolkit/tree/main/packages/artifact","license":"MIT","main":"lib/artifact.js","types":"lib/artifact.d.ts","directories":{"lib":"lib","test":"__tests__"},"files":["lib","!.DS_Store"],"publishConfig":{"access":"public"},"repository":{"type":"git","url":"git+https://github.com/actions/toolkit.git","directory":"packages/artifact"},"scripts":{"audit-moderate":"npm install && npm audit --json --audit-level=moderate > audit.json","test":"cd ../../ && npm run test ./packages/artifact","bootstrap":"cd ../../ && npm run bootstrap","tsc-run":"tsc","tsc":"npm run bootstrap && npm run tsc-run","gen:docs":"typedoc --plugin typedoc-plugin-markdown --out docs/generated src/artifact.ts --githubPages false --readme none"},"bugs":{"url":"https://github.com/actions/toolkit/issues"},"dependencies":{"@actions/core":"^1.10.0","@actions/github":"^5.1.1","@actions/http-client":"^2.1.0","@azure/storage-blob":"^12.15.0","@octokit/core":"^3.5.1","@octokit/plugin-request-log":"^1.0.4","@octokit/plugin-retry":"^3.0.9","@octokit/request-error":"^5.0.0","@protobuf-ts/plugin":"^2.2.3-alpha.1","archiver":"^7.0.1","jwt-decode":"^3.1.2","unzip-stream":"^0.3.1"},"devDependencies":{"@types/archiver":"^5.3.2","@types/unzip-stream":"^0.3.4","typedoc":"^0.25.4","typedoc-plugin-markdown":"^3.17.1","typescript":"^5.2.2"}}');

/***/ }),
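Reviewer note: this file's diff removes the SHA256 digest that `streamExtractExternal` computed while extracting a download. The sketch below shows the general technique of hashing a stream while forwarding the same bytes to a consumer. It is a simplified variation, not the removed code: `pipeWithDigest` is a hypothetical name, it calls `hash.update` from a `data` listener rather than piping into the hash stream, and a plain `Writable` stands in for the unzip extractor.

```javascript
const crypto = require('crypto');
const { PassThrough, Readable, Writable } = require('stream');

// Forward `source` to `sink` and hash every chunk on the way through.
// Resolves with the digest in the `sha256:<hex>` form used by the removed code.
function pipeWithDigest(source, sink) {
  return new Promise((resolve, reject) => {
    const hash = crypto.createHash('sha256');
    const passThrough = new PassThrough();
    // Registered before pipe(), so each chunk is hashed before it is written on.
    passThrough.on('data', chunk => hash.update(chunk));
    source.on('error', reject);
    sink.on('error', reject);
    sink.on('finish', () => resolve(`sha256:${hash.digest('hex')}`));
    source.pipe(passThrough).pipe(sink);
  });
}

// Usage: an in-memory sink that collects the forwarded bytes.
const chunks = [];
const sink = new Writable({
  write(chunk, _encoding, callback) {
    chunks.push(chunk);
    callback();
  }
});
const done = pipeWithDigest(
  Readable.from([Buffer.from('hello '), Buffer.from('world')]),
  sink
);
done.then(digest => console.log(digest));
```

A caller could then compare the resolved value with an expected digest, which is what the removed `expectedHash` check in `downloadArtifactPublic` and `downloadArtifactInternal` did.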

diff --git a/package-lock.json b/package-lock.json

index 75e253f..e6d252e 100644

--- a/package-lock.json

+++ b/package-lock.json

@@ -1,15 +1,15 @@

{

"name": "upload-artifact",

- "version": "4.6.2",

+ "version": "4.6.1",

"lockfileVersion": 2,

"requires": true,

"packages": {

"": {

"name": "upload-artifact",

- "version": "4.6.2",

+ "version": "4.6.1",

"license": "MIT",

"dependencies": {

- "@actions/artifact": "^2.3.2",

+ "@actions/artifact": "^2.2.2",

"@actions/core": "^1.11.1",

"@actions/github": "^6.0.0",

"@actions/glob": "^0.5.0",

@@ -34,10 +34,9 @@

}

},

"node_modules/@actions/artifact": {

- "version": "2.3.2",

- "resolved": "https://registry.npmjs.org/@actions/artifact/-/artifact-2.3.2.tgz",

- "integrity": "sha512-uX2Mr5KEPcwnzqa0Og9wOTEKIae6C/yx9P/m8bIglzCS5nZDkcQC/zRWjjoEsyVecL6oQpBx5BuqQj/yuVm0gw==",

- "license": "MIT",

+ "version": "2.2.2",

+ "resolved": "https://registry.npmjs.org/@actions/artifact/-/artifact-2.2.2.tgz",

+ "integrity": "sha512-UtS1kcINiPRkI3/hDKkO/XdrtKo89kn8s81J67QNBU6RRMWSSXrrfCCbQVThuxcdW2boOLv51NVCEKyo954A2A==",

"dependencies": {

"@actions/core": "^1.10.0",

"@actions/github": "^5.1.1",

@@ -7767,9 +7766,9 @@

},

"dependencies": {

"@actions/artifact": {

- "version": "2.3.2",

- "resolved": "https://registry.npmjs.org/@actions/artifact/-/artifact-2.3.2.tgz",

- "integrity": "sha512-uX2Mr5KEPcwnzqa0Og9wOTEKIae6C/yx9P/m8bIglzCS5nZDkcQC/zRWjjoEsyVecL6oQpBx5BuqQj/yuVm0gw==",

+ "version": "2.2.2",

+ "resolved": "https://registry.npmjs.org/@actions/artifact/-/artifact-2.2.2.tgz",

+ "integrity": "sha512-UtS1kcINiPRkI3/hDKkO/XdrtKo89kn8s81J67QNBU6RRMWSSXrrfCCbQVThuxcdW2boOLv51NVCEKyo954A2A==",

"requires": {

"@actions/core": "^1.10.0",

"@actions/github": "^5.1.1",

diff --git a/package.json b/package.json

index ec05284..68072a9 100644

--- a/package.json

+++ b/package.json

@@ -1,6 +1,6 @@

{

"name": "upload-artifact",

- "version": "4.6.2",

+ "version": "4.6.1",

"description": "Upload an Actions Artifact in a workflow run",

"main": "dist/upload/index.js",

"scripts": {

@@ -29,7 +29,7 @@

},

"homepage": "https://github.com/actions/upload-artifact#readme",

"dependencies": {

- "@actions/artifact": "^2.3.2",

+ "@actions/artifact": "^2.2.2",

"@actions/core": "^1.11.1",

"@actions/github": "^6.0.0",

"@actions/glob": "^0.5.0",

diff --git a/dist/merge/index.js b/dist/merge/index.js

index eabca8a..31792d4 100644

--- a/dist/merge/index.js

+++ b/dist/merge/index.js

@@ -824,7 +824,7 @@ __exportStar(__nccwpck_require__(49773), exports);

"use strict";

Object.defineProperty(exports, "__esModule", ({ value: true }));

-exports.ArtifactService = exports.DeleteArtifactResponse = exports.DeleteArtifactRequest = exports.GetSignedArtifactURLResponse = exports.GetSignedArtifactURLRequest = exports.ListArtifactsResponse_MonolithArtifact = exports.ListArtifactsResponse = exports.ListArtifactsRequest = exports.FinalizeArtifactResponse = exports.FinalizeArtifactRequest = exports.CreateArtifactResponse = exports.CreateArtifactRequest = exports.FinalizeMigratedArtifactResponse = exports.FinalizeMigratedArtifactRequest = exports.MigrateArtifactResponse = exports.MigrateArtifactRequest = void 0;

+exports.ArtifactService = exports.DeleteArtifactResponse = exports.DeleteArtifactRequest = exports.GetSignedArtifactURLResponse = exports.GetSignedArtifactURLRequest = exports.ListArtifactsResponse_MonolithArtifact = exports.ListArtifactsResponse = exports.ListArtifactsRequest = exports.FinalizeArtifactResponse = exports.FinalizeArtifactRequest = exports.CreateArtifactResponse = exports.CreateArtifactRequest = void 0;

// @generated by protobuf-ts 2.9.1 with parameter long_type_string,client_none,generate_dependencies

// @generated from protobuf file "results/api/v1/artifact.proto" (package "github.actions.results.api.v1", syntax proto3)

// tslint:disable

@@ -838,236 +838,6 @@ const wrappers_1 = __nccwpck_require__(8626);

const wrappers_2 = __nccwpck_require__(8626);

const timestamp_1 = __nccwpck_require__(54622);

// @generated message type with reflection information, may provide speed optimized methods

-class MigrateArtifactRequest$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.MigrateArtifactRequest", [

- { no: 1, name: "workflow_run_backend_id", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 2, name: "name", kind: "scalar", T: 9 /*ScalarType.STRING*/ },

- { no: 3, name: "expires_at", kind: "message", T: () => timestamp_1.Timestamp }

- ]);

- }

- create(value) {

- const message = { workflowRunBackendId: "", name: "" };

- globalThis.Object.defineProperty(message, runtime_4.MESSAGE_TYPE, { enumerable: false, value: this });

- if (value !== undefined)

- (0, runtime_3.reflectionMergePartial)(this, message, value);

- return message;

- }

- internalBinaryRead(reader, length, options, target) {

- let message = target !== null && target !== void 0 ? target : this.create(), end = reader.pos + length;

- while (reader.pos < end) {

- let [fieldNo, wireType] = reader.tag();

- switch (fieldNo) {

- case /* string workflow_run_backend_id */ 1:

- message.workflowRunBackendId = reader.string();

- break;

- case /* string name */ 2:

- message.name = reader.string();

- break;

- case /* google.protobuf.Timestamp expires_at */ 3:

- message.expiresAt = timestamp_1.Timestamp.internalBinaryRead(reader, reader.uint32(), options, message.expiresAt);

- break;

- default:

- let u = options.readUnknownField;

- if (u === "throw")

- throw new globalThis.Error(`Unknown field ${fieldNo} (wire type ${wireType}) for ${this.typeName}`);

- let d = reader.skip(wireType);

- if (u !== false)

- (u === true ? runtime_2.UnknownFieldHandler.onRead : u)(this.typeName, message, fieldNo, wireType, d);

- }

- }

- return message;

- }

- internalBinaryWrite(message, writer, options) {

- /* string workflow_run_backend_id = 1; */

- if (message.workflowRunBackendId !== "")

- writer.tag(1, runtime_1.WireType.LengthDelimited).string(message.workflowRunBackendId);

- /* string name = 2; */

- if (message.name !== "")

- writer.tag(2, runtime_1.WireType.LengthDelimited).string(message.name);

- /* google.protobuf.Timestamp expires_at = 3; */

- if (message.expiresAt)

- timestamp_1.Timestamp.internalBinaryWrite(message.expiresAt, writer.tag(3, runtime_1.WireType.LengthDelimited).fork(), options).join();

- let u = options.writeUnknownFields;

- if (u !== false)

- (u == true ? runtime_2.UnknownFieldHandler.onWrite : u)(this.typeName, message, writer);

- return writer;

- }

-}

-/**

- * @generated MessageType for protobuf message github.actions.results.api.v1.MigrateArtifactRequest

- */

-exports.MigrateArtifactRequest = new MigrateArtifactRequest$Type();

-// @generated message type with reflection information, may provide speed optimized methods

-class MigrateArtifactResponse$Type extends runtime_5.MessageType {

- constructor() {

- super("github.actions.results.api.v1.MigrateArtifactResponse", [

- { no: 1, name: "ok", kind: "scalar", T: 8 /*ScalarType.BOOL*/ },

- { no: 2, name: "signed_upload_url", kind: "scalar", T: 9 /*ScalarType.STRING*/ }

- ]);

- }

- create(value) {

- const message = { ok: false, signedUploadUrl: "" };